MOLTWORKER USES A CONTAINER. I USED DURABLE OBJECTS.

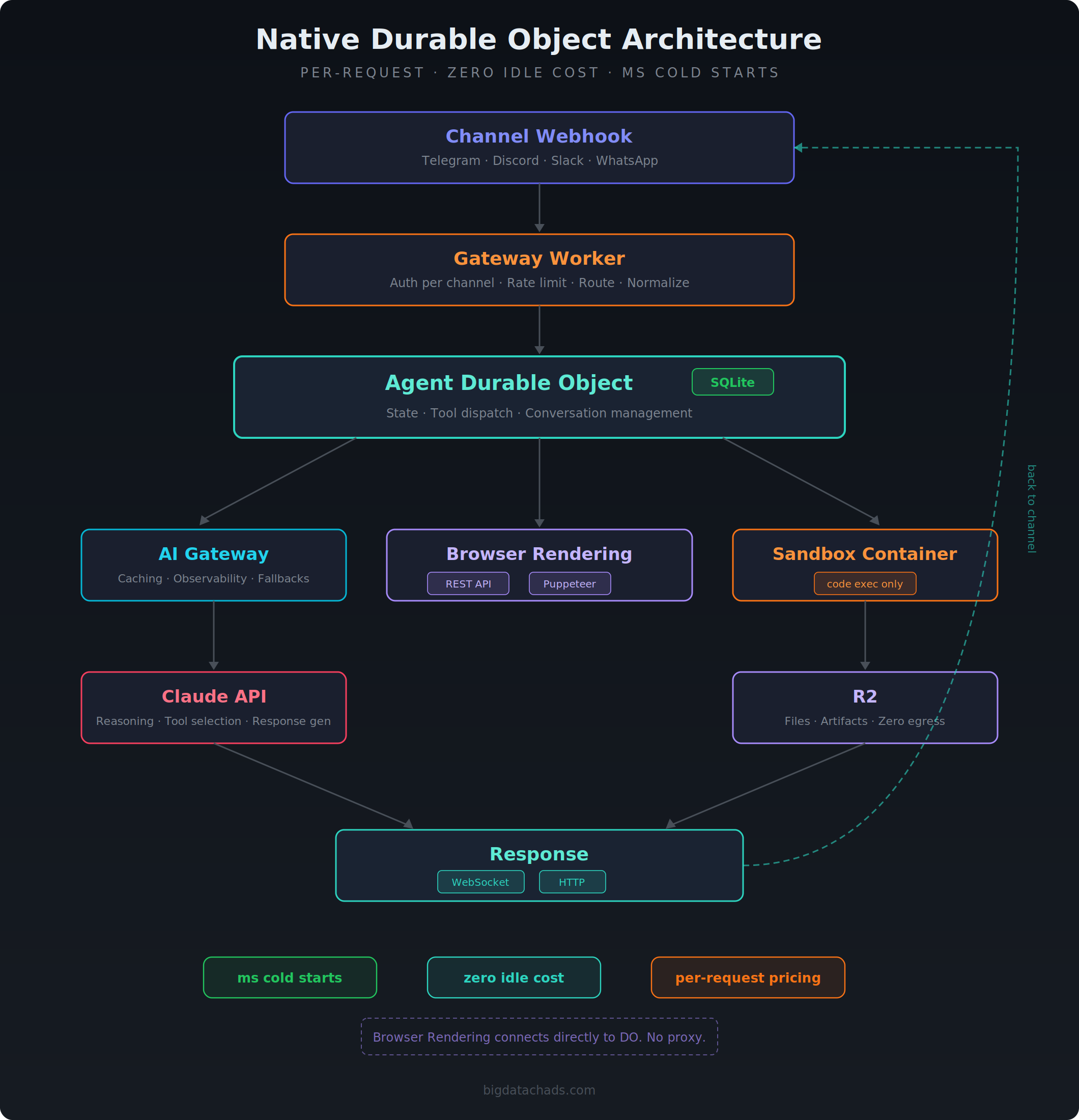

Cloudflare published a blog post about Moltworker, their adaptation of OpenClaw that runs inside a Sandbox container on Workers. I built a multichannel AI agent on Cloudflare. Different architecture. No container for the core runtime. Worth comparing the two approaches.

Moltworker takes an existing agent (OpenClaw) and wraps it in a Sandbox container with a Worker as a proxy. My approach builds the agent natively on Workers and Durable Objects, only using containers for code execution. Same platform, opposite design philosophies.

The architecture comparison

| Concern | Moltworker | Native DO Approach |
|---|---|---|
| Agent Runtime | Sandbox container (Node.js) | Durable Object (Workers runtime) |
| State Management | Filesystem + R2 mount + 5 min backup cron | SQLite in Durable Object (zero latency) |
| Message Routing | Worker proxy to container | Worker with channel adapters |
| Code Execution | Runs inside agent container (OpenClaw monolith) | Separate container via Sandbox SDK |
| Browser Automation | CDP proxy from container through Worker | REST API from DO (stateless) + Puppeteer in DO (stateful) |
| Cold Start | 1 to 2 minutes | Milliseconds |

The fundamental difference is where the agent logic lives. Moltworker puts everything in a container and uses Workers as a thin proxy. The native approach puts everything in Workers and Durable Objects, only reaching for containers when the agent needs to run untrusted code.
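To make the routing row of the table concrete, here is a minimal sketch of a gateway picking the Durable Object that owns an inbound message. The `InboundMessage` shape, the `agentNameFor` helper, and the one-object-per-channel-and-user keying are assumptions for illustration, not code from either project. The local `AgentNamespace` interface only mirrors the `idFromName()` and `get()` calls that a Durable Object namespace binding exposes, so the sketch type-checks outside Workers.

```typescript
// Hypothetical normalized message shape; each channel adapter would map into it.
interface InboundMessage {
  channel: string; // illustrative values: "web", "telegram"
  userId: string;  // channel-specific sender id
  text: string;
}

// Local stand-in mirroring the namespace calls used below (idFromName, get).
interface AgentNamespace<Id, Stub> {
  idFromName(name: string): Id;
  get(id: Id): Stub;
}

// Assumed keying scheme: one agent instance per (channel, user) pair.
function agentNameFor(msg: InboundMessage): string {
  return `${msg.channel}:${msg.userId}`;
}

// Resolve the stub of the Durable Object that owns this conversation.
function routeToAgent<Id, Stub>(
  ns: AgentNamespace<Id, Stub>,
  msg: InboundMessage
): Stub {
  return ns.get(ns.idFromName(agentNameFor(msg)));
}
```

Because the name is deterministic, every message from the same user on the same channel lands on the same object, which is what lets state live next to the agent logic.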

State management is the biggest gap

Moltworker stores state on the container filesystem. It mounts R2 as a filesystem partition via sandbox.mountBucket() and runs a cron job every five minutes to back up config data. Containers are ephemeral. When the container restarts between backup cycles, state from that window disappears.

```
# From Moltworker's README
# "A cron job runs every 5 minutes to sync the moltbot config to R2"
# "Without R2 credentials, moltbot still works but uses ephemeral storage
# (data lost on container restart)"
```

The R2 backup is optional but recommended. Users who skip it lose everything on restart. Users who enable it still have a window of up to five minutes where conversation history, device pairings, and memory can vanish.

Durable Objects with SQLite solve this at the platform level.

```typescript
// State persists automatically. No backup cron needed.
// SQLite lives on the Durable Object. Zero network latency.
// Method on the agent's Durable Object class; this.sql is assumed to be ctx.storage.sql.
async saveMessage(conversationId: string, message: Message): Promise<void> {
  // exec() takes bindings as separate arguments, not as a single array.
  this.sql.exec(
    `INSERT INTO messages (id, conversation_id, role, content, timestamp)
     VALUES (?, ?, ?, ?, ?)`,
    message.id, conversationId, message.role, message.content, Date.now()
  );
}
```

Seven SQLite tables handle conversations, messages, persistent memory, devices, pairing requests, scheduled tasks, and installed skills. Every write is durable immediately. No five-minute window of potential data loss. No separate R2 bucket to configure. No backup scripts to maintain.
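A minimal sketch of how the `messages` table might be created when the object starts. Only the column names come from the `INSERT` above. The column types, the index, and the `SqlLike` interface (a local stand-in for the object's SQL handle, with the query first and bindings after) are assumptions.

```typescript
// Local stand-in for the Durable Object SQL handle.
interface SqlLike {
  exec(query: string, ...bindings: unknown[]): unknown;
}

// Assumed DDL: column names match the INSERT in saveMessage, types are guesses.
const MESSAGES_SCHEMA = `
  CREATE TABLE IF NOT EXISTS messages (
    id TEXT PRIMARY KEY,
    conversation_id TEXT NOT NULL,
    role TEXT NOT NULL,
    content TEXT NOT NULL,
    timestamp INTEGER NOT NULL
  )`;

// Hypothetical index to make "load this conversation in order" cheap.
const MESSAGES_INDEX = `
  CREATE INDEX IF NOT EXISTS idx_messages_conversation
    ON messages (conversation_id, timestamp)`;

// Idempotent, so it is safe to run every time the object wakes up.
function ensureSchema(sql: SqlLike): void {
  sql.exec(MESSAGES_SCHEMA);
  sql.exec(MESSAGES_INDEX);
}
```

Running the `IF NOT EXISTS` statements on every wake keeps schema setup out of any separate deploy step, which fits the "no scripts to maintain" goal.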

Cold starts matter for a personal agent

Moltworker’s README is upfront about this. First request takes one to two minutes while the container starts. Their recommendation is to keep the container running 24/7 to avoid it.

Running a container 24/7 costs roughly $34.50 per month according to their own estimates. That’s the $5 Workers plan plus $26 in memory, $2 in CPU, and $1.50 in disk.

A Durable Object cold starts in milliseconds. The AI response still adds seconds for inference, but the user isn’t waiting two minutes for infrastructure to boot. You pay per request: if nobody messages the agent for three hours, it costs nothing for those three hours, and the DO wakes instantly when the next message arrives.

```
Moltworker always on:          ~$34.50/month (infrastructure only)
Moltworker with sleep:         ~$10-11/month (4 hours/day active)
Native DO infrastructure only: ~$0.50-2.00/month (per-request pricing)
```

For a personal agent that handles maybe 50 conversations a day, the per-request model wins by an order of magnitude.
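The Moltworker figures above can be reproduced with simple arithmetic. This sketch uses only the numbers quoted from their estimate and assumes, as a simplification, that container charges scale linearly with the hours the container is awake each day.

```typescript
// Monthly figures quoted from Moltworker's own estimate (USD).
const WORKERS_PLAN = 5.0;
const ALWAYS_ON_CONTAINER = 26.0 + 2.0 + 1.5; // memory + CPU + disk = 29.50

// Assumption: container charges scale linearly with hours awake per day.
function moltworkerMonthly(hoursPerDay: number): number {
  const clamped = Math.min(Math.max(hoursPerDay, 0), 24);
  return WORKERS_PLAN + ALWAYS_ON_CONTAINER * (clamped / 24);
}

const alwaysOn = moltworkerMonthly(24); // 34.5
const fourHours = moltworkerMonthly(4); // roughly 9.92
```

The four-hour result lands close to the quoted ~$10-11, so the linear assumption looks reasonable for a rough comparison.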

Browser automation without the proxy

Moltworker’s blog post dedicates an entire section to their CDP proxy. They built a thin proxy layer that pipes Chrome DevTools Protocol commands from the Sandbox container through the Worker to Browser Rendering. Three hops. Container to Worker to headless browser and back.

My agent has two browser tools. The stateless tool calls the Cloudflare Browser Rendering REST API directly from the Durable Object. One authenticated fetch. No Puppeteer. No CDP. No container.

```typescript
// One call. No proxy. No container.
const apiUrl = `https://api.cloudflare.com/client/v4/accounts/${env.CF_ACCOUNT_ID}/browser-rendering/markdown`;
const result = await fetch(apiUrl, {
  method: 'POST',
  headers: {
    'Content-Type': 'application/json',
    'Authorization': `Bearer ${env.CF_API_TOKEN}`,
  },
  body: JSON.stringify({ url }),
});
```

Cloudflare spins up a headless browser, executes the action, returns the result. Each call is stateless and independent. Seven actions available including markdown extraction, screenshots stored to R2, structured data extraction via Workers AI, and PDF generation.

The stateful tool uses @cloudflare/puppeteer with puppeteer.launch(env.BROWSER) directly in the Durable Object. The browser session stays alive across multiple tool calls within a conversation. Login flows, multi-step form submission, authenticated scraping. Twelve actions. The DO holds the session reference and cleans up on conversation end. No container involved.
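A rough sketch of that lifecycle: one browser per conversation, reused across tool calls, closed when the conversation ends. The `BrowserSessions` class and the `BrowserLike` interface are hypothetical. Inside a Durable Object, the launcher passed in would be something like `() => puppeteer.launch(env.BROWSER)`.

```typescript
// Minimal surface the manager needs; a Puppeteer browser satisfies it.
interface BrowserLike {
  close(): Promise<void>;
}

class BrowserSessions<B extends BrowserLike> {
  private sessions = new Map<string, Promise<B>>();
  private launch: () => Promise<B>;

  constructor(launch: () => Promise<B>) {
    this.launch = launch;
  }

  // Reuse the conversation's browser if one exists, otherwise launch one.
  // Caching the promise means concurrent tool calls share a single launch.
  acquire(conversationId: string): Promise<B> {
    let session = this.sessions.get(conversationId);
    if (!session) {
      session = this.launch().catch((err) => {
        // Do not keep a failed launch cached; the next call retries.
        this.sessions.delete(conversationId);
        throw err;
      });
      this.sessions.set(conversationId, session);
    }
    return session;
  }

  // Called on conversation end: forget the reference, then close the browser.
  async release(conversationId: string): Promise<void> {
    const session = this.sessions.get(conversationId);
    if (!session) return;
    this.sessions.delete(conversationId);
    await (await session).close();
  }
}
```

Keeping the map in memory is enough here because a browser session cannot outlive the object instance that launched it.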

```
Stateless (browser_render):
  Agent DO → fetch() → Cloudflare REST API → headless Chrome → result

Stateful (browser_session):
  Agent DO → puppeteer.launch(env.BROWSER) → persistent browser on CF edge
           → reuse across tool calls → close on conversation end
```

Compare to Moltworker, where every browser command routes from container to Worker to Browser Rendering. My stateless tool is one hop. My stateful tool connects directly from the DO to the browser instance.

What Moltworker does well

Credit where it matters. Moltworker solves a real problem. OpenClaw was designed to run locally. People were buying dedicated hardware for a personal assistant. Cloudflare showed you can skip the hardware purchase and run it on their platform for $35 a month instead of $500+ upfront.

The AI Gateway integration is worth noting. Swapping the ANTHROPIC_BASE_URL to point at AI Gateway with zero code changes is the kind of thing that makes a platform valuable. I use AI Gateway in my setup too. It’s the right abstraction for provider management.

What I would change about Moltworker

Move state out of the container. Use Durable Objects with SQLite for conversations, memory, and device pairings. Keep the container for code execution only. This eliminates the R2 backup cron entirely and makes cold starts a non-issue for the core agent loop.

Split the container from the agent. The agent should respond in milliseconds via a Durable Object. When it needs to run code, it calls out to a Sandbox container. Two concerns, two runtimes. The container can sleep aggressively because it only wakes for heavy tasks.
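One way to picture the split is a small registry that records which runtime each tool needs, so the container is only woken when a requested tool requires it. `browser_render` and `browser_session` are the tool names used earlier; `run_code`, `remember`, and the `Runtime` labels are hypothetical.

```typescript
type Runtime = "durable-object" | "container";

// Hypothetical registry: which runtime each tool executes in.
const TOOL_RUNTIME = new Map<string, Runtime>([
  ["browser_render", "durable-object"],
  ["browser_session", "durable-object"],
  ["remember", "durable-object"],
  ["run_code", "container"],
]);

// Unregistered tools fall back to the Durable Object, so an unknown
// name never wakes the container by accident.
function runtimeFor(tool: string): Runtime {
  return TOOL_RUNTIME.get(tool) ?? "durable-object";
}

// True only when at least one requested tool needs the sandbox.
function needsContainer(tools: string[]): boolean {
  return tools.some((tool) => runtimeFor(tool) === "container");
}
```

With a check like this in the agent loop, a conversation that never asks for code execution never pays the container's start-up time.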

Use the Browser Rendering REST API or Puppeteer binding directly. The CDP proxy adds complexity. Calling the REST API from a Worker or DO gives you stateless browser capabilities without routing commands through a container. For stateful sessions, puppeteer.launch(env.BROWSER) in a DO gives you persistent browser sessions with no proxy layer.

Cost

The entire architecture hibernates when unused. The DO uses the WebSocket Hibernation API. No browser runs when no browser tools are active. The container sleeps after five minutes of inactivity. The gateway is a stateless Worker. Pages serves static files on the free tier.

Per conversation with 10 message exchanges:

- 20 Worker requests (gateway + agent)

- 40 Durable Object operations

- ~10 to 20 AI Gateway calls (1+ per message, more with tool use)

- 0 container time (unless code execution requested)

- 0 browser time (unless browser tools requested)

Estimated per conversation: $0.02 to $0.08 infrastructure + AI provider cost.
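Scaling the per-conversation counts to a month is plain multiplication. This sketch assumes a 30-day month and the 50 conversations per day used elsewhere in this post. It reports volume only and deliberately applies no prices.

```typescript
// Per-conversation counts from the list above (10 message exchanges).
const PER_CONVERSATION = {
  workerRequests: 20,
  durableObjectOps: 40,
  aiGatewayCallsMin: 10,
  aiGatewayCallsMax: 20,
};

// Assumption: a 30-day month unless told otherwise.
function monthlyVolume(conversationsPerDay: number, days: number = 30) {
  const conversations = conversationsPerDay * days;
  return {
    conversations,
    workerRequests: conversations * PER_CONVERSATION.workerRequests,
    durableObjectOps: conversations * PER_CONVERSATION.durableObjectOps,
    aiGatewayCallsMin: conversations * PER_CONVERSATION.aiGatewayCallsMin,
    aiGatewayCallsMax: conversations * PER_CONVERSATION.aiGatewayCallsMax,
  };
}

// 1,500 conversations, 30,000 Worker requests, 60,000 DO operations,
// and 15,000 to 30,000 AI Gateway calls.
const volume = monthlyVolume(50);
```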

Monthly comparison for a personal agent handling 50 conversations per day. All figures are estimates based on published pricing.

| Cost item | Moltworker (always on) | Moltworker (4hr/day) | Native DO (estimated) |
|---|---|---|---|
| Base plan | $5.00 | $5.00 | $5.00 |
| Container compute | $29.50 | $5.00 | $0.00 (on demand) |
| DO operations | $0.00 | $0.00 | ~$0.15 |
| R2 storage | ~$0.05 | ~$0.05 | ~$0.01 |
| AI provider (Claude) | varies by usage | varies by usage | varies by usage |
| Infrastructure total | ~$34.55 | ~$10.05 | ~$5.16 |

The AI provider cost dominates in both cases and varies heavily with token usage per call. But the infrastructure delta is significant: $29.50 for an always-on container versus $0.15 for Durable Object operations. The native approach scales down to near zero when idle and scales up per request when active.

The point

Moltworker is a proof of concept and Cloudflare labels it as such. It proves OpenClaw can run on their platform. That’s valuable for the OpenClaw community.

But if you’re building an AI agent from scratch on Cloudflare, don’t put the whole runtime in a container. Use Durable Objects for state and conversation management. Use Workers for message routing. Hit the Browser Rendering API directly instead of proxying through a container. Use containers only for the things that actually need a full Linux environment.

Millisecond cold starts. Durable state without backup scripts. Zero idle cost. Per-request pricing. These aren’t minor optimizations. They’re architectural advantages you get for free by building on the platform instead of running inside it.